Introduction

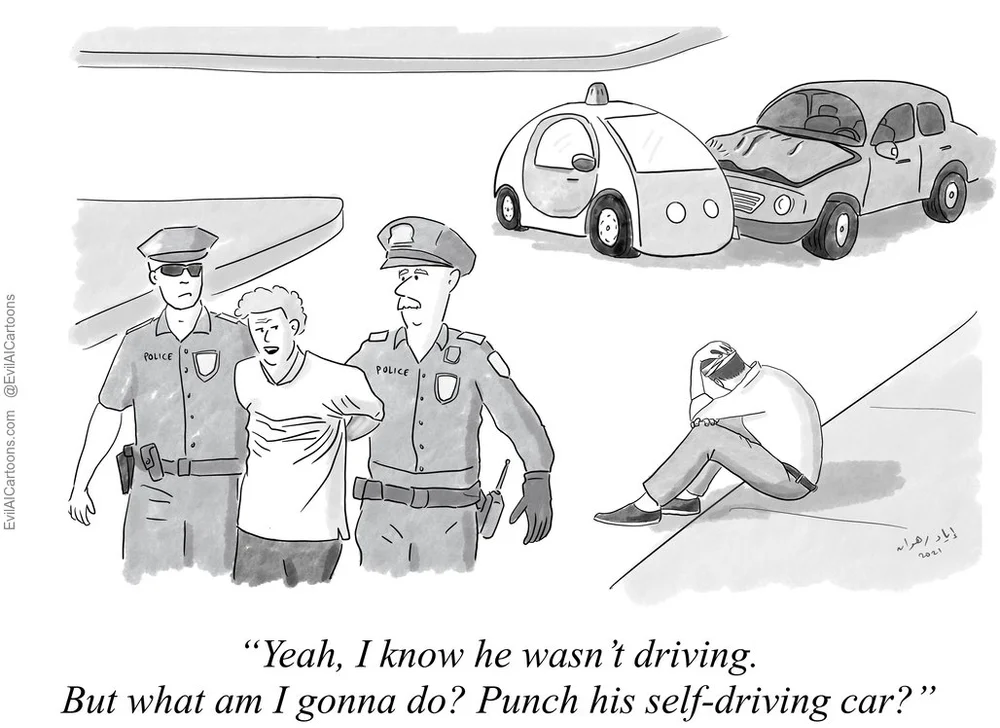

Do you think people have a right to a meaningful job, such as being a taxi driver, even if driverless cars are safer than human-driven cars? What about a doctor who diagnoses cancer: should he/she have that right as well, even if machine learning can do a better job?

Learning objectives for this chapter

By the end of the chapter, participants will be able to:

- reflect on the requirements for AI systems to be ethical

- evaluate their own values

- make choices and formulate arguments relevant to this.

Human agency and oversight

The principle of respect for human autonomy says that AI systems should support people in making their own decisions and choices. This requires that AI systems act as enablers of a democratic, flourishing and just society by supporting user agency and fostering fundamental rights, and that they allow for human oversight.

AI systems, like many other technologies, can both support and undermine people's fundamental rights. They can help people, for example, by making it easier for them to keep track of their own data, or by making education more accessible, which supports their right to education.

Because of their reach and capacity, AI systems can also harm fundamental rights. Where such risks exist, a fundamental rights impact assessment should be carried out before the system is developed. It should evaluate whether the risks can be reduced or justified as necessary in a democratic society, so that people's rights and freedoms are not violated. Furthermore, mechanisms should be in place to receive external feedback on AI systems that may infringe fundamental rights.

Users should be able to make informed, autonomous decisions regarding AI systems. They should be given the knowledge and tools to understand and interact with AI systems to a satisfactory degree and, where possible, be enabled to reasonably self-assess or challenge the system. AI systems should support individuals in making better, more informed choices in accordance with their goals. However, AI systems can sometimes be deployed to shape and influence human behaviour through mechanisms that may be difficult to detect. They may exploit subconscious processes, including various forms of unfair manipulation, deception, herding and conditioning, all of which may threaten individual autonomy. The overall principle of user autonomy must therefore be central to the system’s functionality. Key to this is the right not to be subject to a decision based solely on automated processing when that decision produces legal effects on users or similarly significantly affects them.

https://www.evilaicartoons.com/archive/do-not-use-humans-as-scapegoats

Humans should remain in charge of AI systems. Human oversight helps to ensure that an AI system does not undermine people's autonomy or cause other adverse effects. Such oversight can be achieved through governance mechanisms such as human-in-the-loop (HITL), human-on-the-loop (HOTL) or human-in-command (HIC) approaches.

HITL refers to the capability for human intervention in every decision cycle of the system, although in many cases this is neither possible nor desirable.

HOTL refers to the ability for humans to be involved in the design of the system and to monitor how the system is working.

HIC refers to the ability to oversee the overall activity of the AI system, including its broader economic, social, legal and ethical effects, as well as the ability to decide when and how to use the system in any given situation. In certain situations, this can mean deciding not to use an AI system at all, or setting the level of human discretion allowed while the system is in use. Similarly, it may be appropriate to ensure that humans can override a system's decision.

Furthermore, it must be ensured that public enforcers are able to exercise oversight in line with their mandate. Depending on the AI system's application area and risk level, oversight mechanisms may be needed to varying degrees to support other safety and control measures. All other things being equal, the less oversight a human can exercise over an AI system, the more extensive the testing and the stricter the governance that must be put in place.

Societal and environmental wellbeing

In line with the principles of fairness and prevention of harm, the wider society, other sentient beings and the environment should also be considered as stakeholders throughout the AI system's life cycle. The sustainability and ecological responsibility of AI systems should be encouraged, and research should be fostered into AI solutions that address areas of global concern, such as the UN's Sustainable Development Goals. Ideally, AI systems should be used to benefit all human beings, including future generations.

AI systems may be able to help solve some of society's most pressing problems, but they must be developed and used in the most environmentally friendly way possible. The system's development, deployment and use, as well as its entire supply chain, should be assessed in this regard. Every operational activity within an organisation consumes resources and energy, so less harmful options deserve attention. As such, measures that make AI systems and their associated supply chains more environmentally friendly should be actively encouraged.

https://www.youtube.com/watch?embed=no&v=mvUPj2WbuKc

Social AI systems may change how we understand social agency, as well as our social relationships and attachments, if we are constantly exposed to them in all areas of our lives. AI systems can help people improve their social skills, but they can also contribute to the deterioration of those skills, which could harm people's physical and mental wellbeing. The effects of these systems must therefore be carefully monitored and examined.

https://www.youtube.com/watch?embed=no&v=LmNUtG6KxJM

It is also important to consider how AI will affect institutions, democracy and society as a whole. The use of AI systems should be given careful consideration in situations relating to the democratic process, including political decision-making and elections.