Introduction

Do you think people have a right to a meaningful job, such as driving a taxi, even though driverless cars are safer than human-driven cars? What about a doctor diagnosing cancer: should they have that right as well, even though machine learning can do a better job?

Learning objectives for Chapter

By the end of the chapter, participants will be able to:

- reflect on requirements for AI systems to be ethical

- evaluate their own values

- make choices and formulate arguments relevant to this.

Topic outline

Human agency and oversight

The principle of respect for human autonomy says that AI systems should support people in making their own decisions and choices. This requires that AI systems act as enablers of a democratic, flourishing, and just society by supporting the user’s agency and fostering fundamental rights, and that they allow for human oversight.

AI systems, like many other technologies, can both help and hurt people's basic rights. They can help people, for example, by making it easier for them to keep track of their own data, or by making education more accessible, which supports their right to education.

AI systems can also harm fundamental rights because of how far-reaching and powerful they are. Where there are threats to fundamental rights, an impact assessment should be conducted. This should be carried out before the system is built, and it should identify whether the risks can be reduced or justified in a democratic society so that people's rights and freedoms are not violated. Furthermore, there should be ways to gather feedback from people outside the teams that build and operate AI systems to ensure that people's basic rights are protected.

Users should be able to make informed autonomous decisions regarding AI systems. They should be given the knowledge and tools to understand and interact with AI systems to a satisfactory degree and, where possible, be enabled to reasonably self-assess or challenge the system. AI systems should support individuals in making better, more informed choices in accordance with their goals. AI systems can sometimes be deployed to shape and influence human behaviour through mechanisms that may be difficult to detect. They may harness sub-conscious processes, including various forms of unfair manipulation, deception, herding and conditioning, all of which may threaten individual autonomy. The overall principle of user autonomy must be central to the system’s functionality. Key to this is the right not to be subject to a decision based solely on automated processing when this has legal implications for users or similarly significantly affects them.

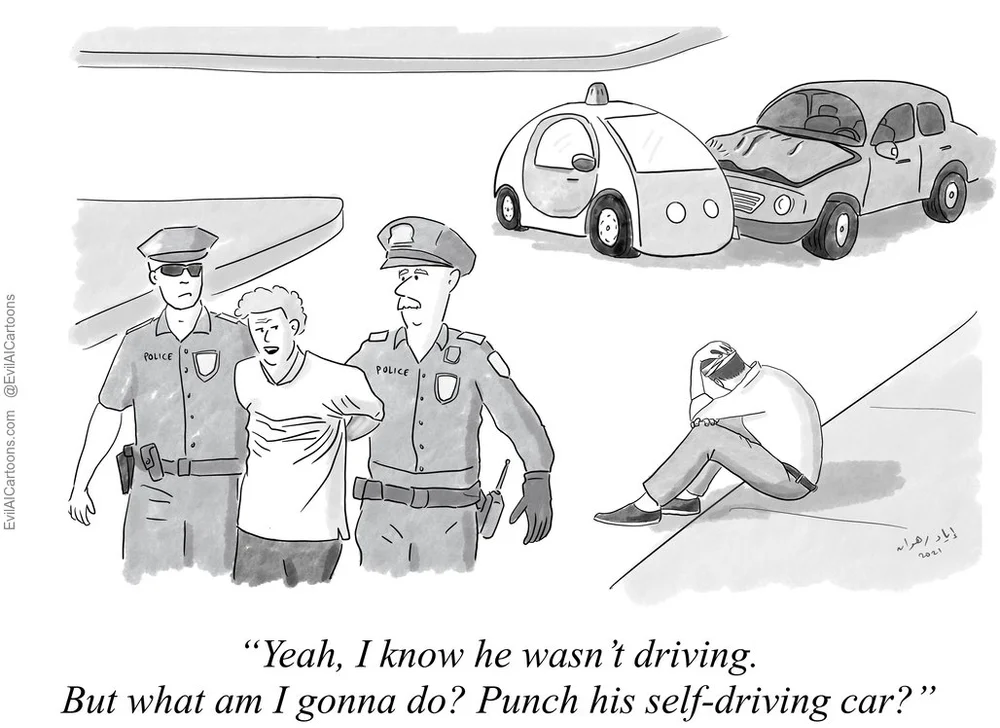

https://www.evilaicartoons.com/archive/do-not-use-humans-as-scapegoats

Humans should remain in charge of what AI systems do. Human oversight helps to make sure that an AI system does not undermine people's autonomy or cause other adverse effects. Oversight can be achieved through governance approaches such as human-in-the-loop (HITL), human-on-the-loop (HOTL), or human-in-command (HIC).

HITL refers to the ability for humans to intervene in every decision that the system makes, which in many cases is neither possible nor desirable.

HOTL refers to the ability for humans to be involved in the design of the system and to monitor how the system is working.

HIC is the ability to oversee the AI system's wider economic, social, legal, and ethical effects, as well as the ability to decide when and how to use the system in any given situation. In certain situations, it can be better not to use an AI system, or to set limits on how humans can use the system. Similarly, it may be appropriate to ensure that humans can override a system's decision.

Furthermore, it is necessary to ensure that public enforcers can carry out their duties in line with their mandate. Depending on the AI system's use and risk level, different oversight mechanisms may be required to support other safety and control measures. All other things being equal, the less control a human has over an AI system, the more extensive the testing and the stricter the rules that must be put in place.

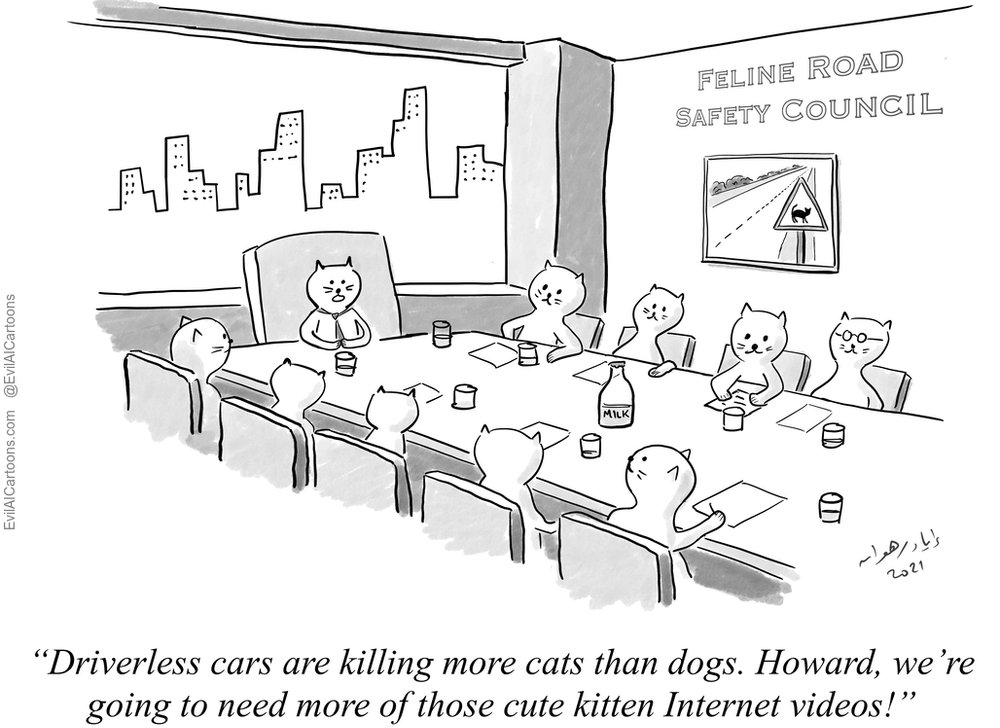

Societal and environmental wellbeing

Following the principles of fairness and preventing harm, the wider community and all other living things should also be considered as key stakeholders in the AI system's life cycle. The sustainability and ecological responsibility of AI systems should be encouraged, and research should be fostered into AI solutions that address global concerns, such as the UN's Sustainable Development Goals. Ideally, AI systems should be used to benefit all humans, including future generations.

AI systems may be able to help solve some of the most important problems in society, but they must be used in the most environmentally friendly way possible. The system's development, deployment, and use, as well as its entire supply chain, should be assessed in this way. Building and operating AI systems consumes considerable resources and energy, so less harmful options should be given attention. As such, measures that make AI systems and their associated supply chains more environmentally friendly should be actively encouraged.

https://www.youtube.com/watch?embed=no&v=mvUPj2WbuKc

Social AI systems may change how we think about social agency, or how we think about our social relationships and attachments, if we are constantly exposed to them in all areas of our lives. AI systems can help people improve their social skills, but they can also make them worse and this could impact negatively on people's physical and mental health. The effects of these systems must therefore be carefully monitored and examined.

https://www.youtube.com/watch?embed=no&v=LmNUtG6KxJM

It is also important to think about how AI will affect institutions, democracy, and society as a whole. The use of AI systems should be carefully considered in situations that involve the democratic process, such as political decision-making and elections.

Technical robustness and safety

Technical robustness is an important part of creating an AI system that is trustworthy. This is closely linked to the principle of preventing harm. It means that AI systems must be built with a proactive approach to risks, and in a way that ensures that they do what they are supposed to do whilst minimizing unintentional and unexpected harm, and preventing unacceptable harm. Changes in their operating environment should also be taken into account, as well as the presence of other agents (human and artificial) that may interact with the system in an adversarial way. In addition, the physical and mental well-being of humans should be considered.

Back in the 1940s, the science fiction author Isaac Asimov wrote his Three Laws of Robotics:

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey orders given it by human beings except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

Resilience to attack and security is an important consideration. Like all software systems, AI systems should be protected from vulnerabilities that attackers can exploit. Attacks may target the data (data poisoning), the model (model leakage), or the underlying infrastructure, which includes both software and hardware.

If an AI system is attacked, for example in an adversarial attack, both its data and its behaviour can be changed. This can lead the system to make different decisions, or it can cause it to shut down altogether.

Systems and data can also be damaged or corrupted by people with malicious intent, or by exposing the hardware to hazardous conditions. Insufficient security procedures can lead to bad decisions or even physical harm. In order for AI systems to be considered safe, human decision-makers need to consider possible unintended uses of the AI system (e.g. dual-use applications) and how the system could be abused by ill-intentioned individuals. Steps should be taken to prevent and mitigate these risks.

https://www.youtube.com/watch?embed=no&v=BNwWRwJ7XSA&t=15

A fallback plan should be available if something goes wrong with an AI system. This may mean that the system switches from a statistical procedure to one that follows rules, or that it asks a human operator to intervene before it proceeds further with its work. It is crucial that the system does what it is designed and intended to do without harming living things or the world around them. This includes minimizing unintended consequences and mistakes.
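
The fallback idea above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a reference design: the `statistical_model` function, the `rule_based` function, the sensor-style `reading` input, and the confidence threshold of 0.8 are all hypothetical and chosen to keep the example small.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

CONFIDENCE_THRESHOLD = 0.8  # assumed cut-off, chosen only for illustration


@dataclass
class Decision:
    label: str
    source: str  # "model", "rules", or "human"


def statistical_model(reading: float) -> Tuple[str, float]:
    """Hypothetical stand-in for a trained classifier: returns (label, confidence)."""
    # Confidence is lowest near the decision boundary at 50.0.
    confidence = min(1.0, abs(reading - 50.0) / 25.0)
    label = "high" if reading >= 50.0 else "low"
    return label, confidence


def rule_based(reading: float) -> Optional[str]:
    """Transparent fallback rules; returns None when no rule applies."""
    if reading >= 55.0:
        return "high"
    if reading <= 45.0:
        return "low"
    return None


def decide(reading: float) -> Decision:
    """Use the model when it is confident, then rules, then a human operator."""
    label, confidence = statistical_model(reading)
    if confidence >= CONFIDENCE_THRESHOLD:
        return Decision(label, "model")
    rule_label = rule_based(reading)
    if rule_label is not None:
        return Decision(rule_label, "rules")
    # Neither the model nor the rules can decide safely: ask a person.
    return Decision("needs_review", "human")
```

Calling `decide(90.0)` is handled by the model, `decide(60.0)` falls back to the rules, and `decide(51.0)` is handed to a human operator. In a real system the threshold and the rules would need to be justified and tested for the specific application.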

In addition, processes should be created to help people understand and assess the risks of using AI systems in a wide range of applications. The level of safety measures that are needed is determined by both the level of risk posed by an AI system and how well it can do its job. When it can be foreseen that the development process or the system itself has multiple risks, it is important to develop and test safety measures early on.

Accuracy refers to an AI system's ability to make the right decisions, for example, by correctly classifying information into the relevant groups, or by making the appropriate predictions, recommendations, or assessments based on data or models. An explicit and well-formed development and evaluation process can help mitigate and correct the risks that arise from inaccurate predictions. When the system cannot avoid making some incorrect predictions, it is important that it can show how likely these mistakes are to happen. In situations where the AI system has an impact on people's lives, a high level of accuracy is especially important.

In order for AI systems to work well, they need to be able to produce the same results over and over again. A reliable AI system should be able to handle many different types of inputs and situations. Reproducibility refers to whether an AI experiment behaves the same way when it is repeated under the same conditions. Replication files can make it easier to test and reproduce the system's behaviour.
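
One common ingredient of reproducibility is fixing the seed of any random number generator that an experiment uses. The sketch below uses only Python's standard `random` module; the `run_experiment` function is a hypothetical stand-in for a real training or evaluation run, and real systems usually have further sources of variation (hardware, library versions, data ordering) that a seed alone does not control.

```python
import random
from typing import List


def run_experiment(seed: int, n_samples: int = 5) -> List[float]:
    """Hypothetical 'experiment': draws pseudo-random scores from a seeded generator."""
    rng = random.Random(seed)  # local generator, so no hidden global state
    return [round(rng.random(), 4) for _ in range(n_samples)]


first_run = run_experiment(seed=42)
second_run = run_experiment(seed=42)   # same seed, same conditions
other_run = run_experiment(seed=7)     # different seed

print("Reproduced:", first_run == second_run)
print("Differs with another seed:", first_run != other_run)
```

Recording the seed alongside the code and data versions is one simple form of the replication files mentioned above.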

Privacy and data governance

Privacy is closely linked to the idea of preventing harm and it is a fundamental right that AI systems can impact on. The quality and integrity of the data, the relevance of the data to the domain where the AI systems will be used, the access protocols, and how the data will be processed are important to ensuring privacy.

AI systems must protect people's privacy and data throughout the system's entire lifecycle, and managing this is referred to as data governance. When a person interacts with an AI system, information is created about them over time. This includes information provided by them when they first start using the system, as well as information that the AI system creates about them as they interact with it. AI systems may be able to determine not only what an individual likes or enjoys, but also their sexual orientation, age, gender, religious or political views, based on digital records of their behaviour. Because people need to be able to trust the data gathering process, it must be assured that the information that is collected about them will not be used to discriminate against them in any way.

https://www.youtube.com/watch?embed=no&v=1bhpWEMZ6XA

The quality of the data used in AI systems is fundamental to how well they work. When data is gathered, it may contain socially constructed biases, inaccuracies, errors, and mistakes. This requires attention before any data set can be used. In addition, the integrity of the data must be checked. Feeding malicious data, or data that has not been cleaned, into an AI system may change its behaviour, especially if the system is self-learning.

The processes and data sets used must be tested and documented at each step, from planning to training to testing to deployment. This should also apply to AI systems that were not built in-house but were bought from an external party.

Whether someone is a user of the system or not, there should be rules about how people can access their own data. These protocols should clearly state who can see the data and when. Only people who need to see an individual's data should be able to do so. This requirement is closely linked to the idea of transparency and includes the data, the system, and the business models that make up an AI system.
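
A data access protocol of this kind can be written down as an explicit policy that states who may see which data, combined with a log of when access was requested. The Python sketch below is illustrative only: the roles, data categories, and the `AccessRequest` structure are hypothetical, and a real organisation would derive them from its legal obligations and internal rules.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical policy: which roles may read which category of personal data.
ACCESS_POLICY: Dict[str, Set[str]] = {
    "contact_details": {"data_subject", "support_agent"},
    "health_records": {"data_subject", "treating_clinician"},
}


@dataclass
class AccessRequest:
    requester_id: str
    role: str
    subject_id: str   # the person the data is about
    category: str


audit_log: List[dict] = []  # records who asked for what, and when


def can_access(request: AccessRequest) -> bool:
    """Allow access only if the role needs this category of data."""
    allowed = request.role in ACCESS_POLICY.get(request.category, set())
    # People acting as data subjects may only see their own data.
    if request.role == "data_subject" and request.requester_id != request.subject_id:
        allowed = False
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "requester": request.requester_id,
        "category": request.category,
        "allowed": allowed,
    })
    return allowed
```

For example, `can_access(AccessRequest("u1", "data_subject", "u1", "health_records"))` is allowed, while a support agent asking for the same health records is refused. Every request is logged either way, which supports later auditing.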

Transparency

Transparency, in general terms, is the quality of being easily seen through. In terms of AI, it includes making the data, the system, and the business models associated with the system clear to all.

The AI system's decisions should be documented in the best way possible, including the data sets and processes that led to them, and the algorithms that were used. This makes it easier for people to see how the AI system made its decisions and came to its conclusions, thus making it transparent. When an AI decision is wrong, it also helps to determine why and to avoid making the same mistake again. Similarly, traceability makes the system easier to audit and explain.

Explainability is the ability to explain both the technical processes of an AI system and the human decisions that go along with them (e.g. application areas of a system). AI systems must be able to make decisions that can be explained and traced by humans. There may also be trade-offs to be made between making a system more explainable (which may make it less accurate) and making it more accurate (which may make it less explainable).

When an AI system has a big impact on people's lives, they are entitled to get an acceptable explanation of how the AI system made its decisions. Such an explanation should be timely and tailored to the level of knowledge of the individual (e.g. layperson, user, or regulator).

In addition, explanations of how an AI system affects and shapes the way an organization makes decisions, how the system was built, and why it was used should be available, hence ensuring business model transparency.

https://www.youtube.com/watch?embed=no&v=3wLqsRLvV-c&t=6

AI systems should not make users think they are humans. People have the right to know that they are interacting with an AI system. This means that AI systems must be identifiable as such. In addition, people should be able to choose human interaction instead when this is necessary in order to protect their basic rights.

Beyond this, the AI system's abilities and limitations should be communicated to AI practitioners or end users in a way that fits the use case at hand. This could include informing people of how accurate the AI system is, as well as what it is unable to do.

Diversity and non-discrimination

In order to make AI that is trustworthy, it is necessary to ensure that everyone has a chance to be a part of the AI system's life cycle. This also means that everyone who is affected by the project should be considered and involved in the process. Additionally, everyone involved should have equal access to the project and be treated in the same manner. This rule is closely linked to the idea of fairness.

It is possible that data used by AI systems (both for training and when they are operational) could have historical bias, be incomplete, or be subject to bad governance. There could be unintended (indirect) prejudice, discrimination against, and marginalisation of certain groups of people if these biases remain within the data set.
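
One simple way to look for this kind of bias is to compare how often each group receives a favourable outcome in the data or in the system's decisions. The Python sketch below computes these rates and the gap between them. The group labels and the toy records are made up for illustration, and a single measure like this cannot establish on its own whether a system is fair.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """records: (group, outcome) pairs, where outcome 1 is favourable and 0 is not."""
    totals: Dict[str, int] = defaultdict(int)
    favourable: Dict[str, int] = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favourable[group] += outcome
    return {group: favourable[group] / totals[group] for group in totals}


def parity_gap(records: Iterable[Tuple[str, int]]) -> float:
    """Difference between the highest and lowest favourable-outcome rates."""
    rates = selection_rates(records)
    if not rates:
        return 0.0  # no data, so no measurable gap
    return max(rates.values()) - min(rates.values())


# Made-up toy data: group "A" is favoured three times out of four, group "B" once.
toy_records = [
    ("A", 1), ("A", 1), ("A", 1), ("A", 0),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]

print(selection_rates(toy_records))
print(parity_gap(toy_records))
```

A large gap is a signal to investigate the data and the system further, not proof of discrimination in itself.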

Harm can also result from the intentional exploitation of consumer biases, or from unfair competition such as collusion or a non-transparent market. Discriminatory or identifiable bias should be removed during the data collection phase wherever possible. To counter bias in how systems are developed, there should be oversight processes in place that look at the system's purpose, constraints, requirements, and decisions in a clear and open way. In addition, hiring people from different backgrounds, cultures, and fields can help to filter out biases, and this should be actively encouraged.

https://www.youtube.com/watch?embed=no&v=59bMh59JQDo

Users should be the focus of systems in business-to-consumer settings, and they should be designed in a way that allows everyone to use AI products or services. For example, people with disabilities need to be catered for so that they can effectively use the technology. AI systems should not have a one-size-fits-all approach and universal design principles should be applied to reach the widest possible range of users. Rules pertaining to accessibility should be adhered to and assistive technology used so that everyone can participate in computer-mediated human activities.

In order to build AI systems that are trustworthy, it is important to confer with people who will be affected by the system in some way. It is beneficial, if not essential, to glean feedback from people even after AI systems have been implemented, and to set up long-term ways for people to be involved. This can be done, for example, by giving users consultative roles in relation to the AI implementation process.

Accountability

Accountability complements the other ethical requirements and is closely linked to the idea of fairness. It is important that there are mechanisms to ensure responsibility and accountability for AI systems and their results, both before and after they are built, deployed, and used.

https://www.evilaicartoons.com/archive/terminology-black-box

Auditability refers to being able to check algorithms, data, and design processes. However, this does not mean that information about the AI system's business models and intellectual property must always be made public. Evaluation by internal and external auditors, as well as the availability of their evaluation reports, can help assure the trustworthiness of the technology. Where applications affect basic rights, such as in safety-critical scenarios, AI systems should be subject to appropriate and relevant checks, and any system must be able to report decisions or actions that lead to a certain system outcome, as well as what happens as a result.

AI systems can have negative consequences, including for people who are only indirectly affected by them, so it is important to find, assess, document, and try to minimize such occurrences. Whistleblowers, NGOs, and trade unions should be able to get the protection they need when they report problems with an AI system. Negative impacts become apparent if AI systems are not planned and used appropriately, so impact assessments (like red teaming or other types of Algorithmic Impact Assessment) can help to eliminate or minimize them. In order for these assessments to be proportionate, they should be based on how much risk the AI systems pose to people.

A red team, or team red, is a group that plays the role of an enemy or competitor to provide security feedback from that perspective. Red teams are used in many fields, especially in cybersecurity, airport security, the military, and intelligence agencies.

When implementing the above requirements, conflicting concerns may arise, which may lead to trade-offs as a middle ground. Such trade-offs should be dealt with in a rational and methodical way, and the interests and values that the AI system may affect should be identified. In such instances, trade-offs should be explicitly acknowledged and evaluated in terms of their risk to ethical principles, including basic rights. There should be a valid justification for any decision made about which trade-off to make, and the person who makes the decision must accept responsibility for making it. The onus also lies with them to ensure that the system can be changed if necessary.

Further readings

EvilAICartoons

EvilAICartoons "aims to educate and stimulate discussion about the societal impacts of Artificial Intelligence through the cartoon/comics medium. Each cartoon is accompanied by a brief blog post that provides more context and useful pointers to further reading. By better understanding AI risks, we can reduce our anxiety about the technology, and embrace all the benefits it offers to humanity."

Stanford Encyclopaedia of Philosophy

This contains an article entitled "Ethics of Artificial Intelligence and Robotics". It discusses how "Artificial intelligence (AI) and robotics are digital technologies that will have significant impact on the development of humanity in the near future. They have raised fundamental questions about what we should do with these systems, what the systems themselves should do, what risks they involve, and how we can control these."

MoralMachine

"We show you moral dilemmas, where a driverless car must choose the lesser of two evils, such as killing two passengers or five pedestrians. As an outside observer, you judge which outcome you think is more acceptable. You can then see how your responses compare with those of other people.

If you are feeling creative, you can also design your own scenarios, for you and other users to browse share and discuss"

Ethics Guidelines for Trustworthy AI

These guidelines have been developed by a group of independent experts commissioned by the European Commission.

Why is ethics crucial in the development of AI?