Image recognition

In this section we bring together a series of applications based on Artificial Intelligence. These technologies have evolved very significantly in recent years and are particularly important because of their potential for direct interaction with human beings.

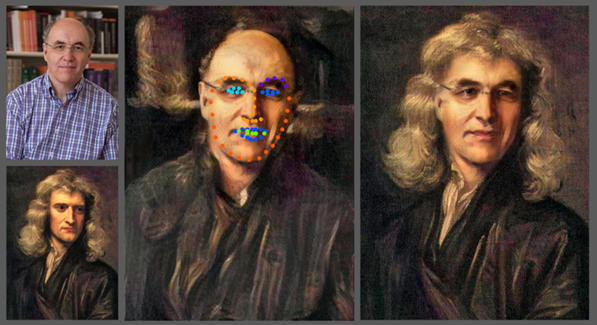

The general public is already familiar with some examples of what they make possible: the term deepfake, mobile apps such as FaceApp or FakeApp, famous videos of public figures saying things they never said, and commercials starring celebrities who died long ago. The list goes on and on.

Source: https://upload.wikimedia.org/wikipedia/commons/4/4c/Sw-face-swap.png

These applications will foreseeably keep arriving on the market and spreading in the coming years. They will be the most socially visible face of the Artificial Intelligence revolution. Measures must be taken to prevent fraud and to teach the public to think critically about content that appears authentic.

However, the technologies behind these examples, as well as others dedicated to natural language and human voice processing, are tools with enormous potential for growth in a wide range of applications and sectors.

Teaching a computer how to "see" is no easy task. For a computer to actually "see" the world as people or animals do, it needs the help of image recognition software.

Image recognition is the process by which software analyses images and transforms the information they contain into data that is relevant to humans.

This technology has a multitude of applications in our daily lives since, thanks to image recognition, we are able to make technology "communicate" with us.

An image recognition programme therefore acts like the part of the human brain that processes the information received by the eyes. However, there is a big difference between two tasks. One is getting a computer to recognise a specific image with predetermined characteristics, such as a barcode or a QR code. The other is getting it to recognise objects or shapes that appear in unexpected states and with very high variability, such as a traffic sign or a face.

Image recognition in the latter case is usually achieved by creating a neural network that processes the individual pixels of an image. These neural networks are fed a large number of images in order to "teach" them how to recognise similar images.
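To make the idea of a network that "processes individual pixels" more concrete, here is a minimal sketch in Python. It is a deliberately tiny toy built on stated assumptions. A single artificial neuron (a perceptron), far simpler than the deep neural networks used in practice, learns to tell a "plus" shape from an "X" shape. The 3x3 black-and-white images are made up for illustration. Each pixel gets a weight, and training adjusts those weights every time the neuron misclassifies an example.

```python
"""A minimal sketch of learning to recognise shapes from raw pixels.

Assumptions: a single artificial neuron (a perceptron) stands in for the much
larger neural networks described in the text, and the tiny 3x3 images below
are made up for illustration.
"""

# Each 3x3 black-and-white image is flattened row by row into 9 pixel values
# (1 = dark pixel, 0 = light pixel). Label 1 = "plus" shape, 0 = "X" shape.
PLUS = [0, 1, 0,
        1, 1, 1,
        0, 1, 0]
X_SHAPE = [1, 0, 1,
           0, 1, 0,
           1, 0, 1]
TRAINING_DATA = [
    (PLUS, 1),
    (X_SHAPE, 0),
    ([1, 1, 0, 1, 1, 1, 0, 1, 0], 1),  # "plus" with one stray dark pixel
    ([1, 0, 1, 0, 1, 0, 1, 0, 0], 0),  # "X" with one corner missing
]


def predict(weights, bias, pixels):
    """Weight every pixel, add everything up with a bias and apply a threshold."""
    activation = bias + sum(w * p for w, p in zip(weights, pixels))
    return 1 if activation > 0 else 0


def train(data, max_epochs=100, learning_rate=1.0):
    """Perceptron learning rule: adjust the weights only after a mistake."""
    weights = [0.0] * len(data[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for pixels, label in data:
            error = label - predict(weights, bias, pixels)  # -1, 0 or +1
            if error != 0:
                mistakes += 1
                # Only dark pixels (p = 1) have their weights strengthened or weakened.
                weights = [w + learning_rate * error * p
                           for w, p in zip(weights, pixels)]
                bias += learning_rate * error
        if mistakes == 0:  # every training image is now classified correctly
            break
    return weights, bias


if __name__ == "__main__":
    weights, bias = train(TRAINING_DATA)
    # An image not in the training data: a "plus" with a different stray pixel.
    unseen = [0, 1, 0, 1, 1, 1, 0, 1, 1]
    print("plus" if predict(weights, bias, unseen) == 1 else "X")
```

With these four examples, training stops after the second pass over the data, and the unseen image is classified as "plus". Real image recognition systems differ from this toy in three ways: they stack many layers of neurons, they are usually trained with gradient-based methods rather than this rule, and they learn from thousands or millions of labelled images. The underlying idea of adjusting weights from examples is the same.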

An AI will therefore be able to recognise the objects or shapes it detects in an image, provided it has been trained to do so. Examples include characters printed on a piece of paper that we want to translate, our face when unlocking a device, or the road signs that an autonomous vehicle "sees".

Source: https://www.youtube.com/watch?v=Wq2ay3_bNuw

This development is enormously useful for any system that, like a robot, needs to recognise and categorise the objects in its environment quickly and accurately. A good example is driverless vehicles, which use computer vision and image recognition to identify pedestrians, road signs and other vehicles. The same applies to many other applications. Let us look at two examples:

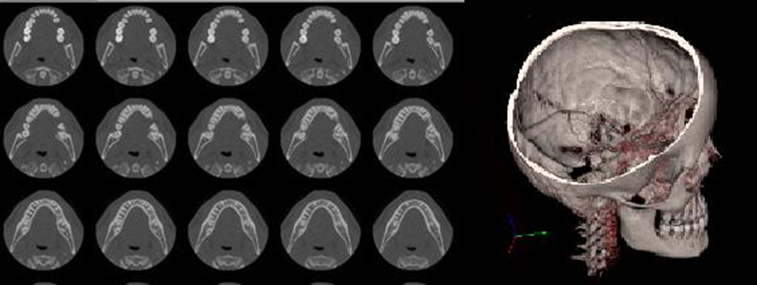

- Making medical diagnoses

Systems trained through deep learning learn to identify disease patterns from thousands of images of real cases, such as CT or MRI scans.

Using these systems for medical diagnosis has enormous potential to improve the accuracy and speed of diagnosis, enabling disease prediction and early treatment.

- Wind turbine maintenance

Inspecting wind turbines for maintenance is difficult because of their size: they can be up to 120 metres high, and their blades span the equivalent of a 22-storey building.

Carried out by people, these inspections were very tedious and meant that each wind turbine had to be shut down for days at a time.

The aim is to detect marks on the blade surfaces that indicate deterioration. It is no longer necessary to climb up to the blades or photograph them through telescopes. Drones now capture approximately 400 images of the three blades in 20 minutes. An image recognition system based on Artificial Intelligence then detects which marks indicate deterioration that requires maintenance, with greater accuracy than a human inspector.

Image generation

When it comes to image generation, AI also has a lot to say. We have always regarded creativity as an intrinsically human quality that a machine could never possess. Artificial Intelligence is proving that much remains to be written and that we can take nothing for granted.

Machine learning systems based on "generative adversarial networks" have been with us for some years now. You may already be familiar with websites such as This Person Does Not Exist. These show how such systems can generate very realistic images of the faces of people who do not exist.

There are other examples that you may not know about, that you can easily try out for yourself, and that you may find interesting:

- GauGAN

It is an Artificial Intelligence system from NVIDIA that generates a landscape image from a sketch indicating the elements you want to see. Each element, such as trees, sky, mountains or water, corresponds to a colour. It can also generate landscape images from a descriptive text.

- Palette

This system demonstrates how another family of Deep Learning systems for artificial image generation, the so-called "diffusion models", is reaching another level.

The same system is capable of:

- Colouring images: a colour version is generated from a greyscale image.

- Inpainting (gap-filling): filling in missing parts of an image.

- Uncropping: enlarging the frames of an image, either to the right, left, top or bottom. The AI creates the rest, thus generating a new image.

Source: https://iterative-refinement.github.io/palette/videos/palette_movie_v2.m4v

- Dall-e

This is an OpenAI model trained to generate realistic images from any text description given to the system, which allows it to create the objects we imagine. It is an example of the possibilities offered by combining Natural Language Processing (which we will see later) and generative models.

Do you want to see a lamp in the shape of an avocado, or a green stop sign...?

Try Dall-e.

As for video generation, deepfakes have already established themselves as a creative resource in advertising and marketing. Bruce Lee, Steve McQueen, Audrey Hepburn or Martin Luther King are just some of the people who have been the subject of deepfake videos used in commercials.

These are cases in which the videos have been created legally, with the necessary permissions, and even with the collaboration of the heirs of these people.

However, there have also been many cases of these techniques being used for identity theft for malicious purposes: extortion, generation of hoaxes, etc.

Source: https://www.youtube.com/watch?v=nWoL4iKGzTQ

The rise of these negative manifestations of Artificial Intelligence has led institutions around the world to devise or propose rules for the use of the technology. For example, the European Parliament has already concluded that these methods may have associated risks "of a psychological, financial and social nature, with impacts ranging from the personal to the collective".

Rules will be needed to prevent malicious use. For businesses, however, video generation offers a new production model in which physical presence is not essential and access to reference characters can become more affordable. Providers such as Synthesia offer video creation at a hundredth of the cost, and much faster. They only need a text or a recording to manipulate the facial movements in stock footage.

Natural language processing

Natural language processing (NLP) is a field within Artificial Intelligence and linguistics that studies interactions between humans and machines using natural language.

It focuses on processing human language, breaking it down into parts, and identifying the most relevant elements of the messages it conveys. The aim of Natural Language Understanding and Generation is to enable machines to understand, interpret and manipulate human language.

Source: https://www.youtube.com/watch?v=IIaYk2hIYKk

Natural language processing is powered by Machine Learning.

A Machine Learning model is fed with a "corpus" of text or spoken language, i.e. with words, phrases, sentences and complete texts that are used to train it.

Thanks to this training, the model can learn to perform various types of analysis on the text to be processed. After preparing the text, dividing it into smaller parts, and eliminating irrelevant parts, it will carry out different types of analysis depending on the purpose of the model in question. These analyses can be:

- Morphological: distinguishes different types of words (verbs, nouns, prepositions, etc.) and their variations (gender, number, tense, etc.).

- Syntactic: separates sentences from each other and analyses their constituent parts (subject, verb, predicate) in order to extract their meaning. Identifying grammatical structures allows it to extract the relationships between the different parts of the text.

- Semantic: analyses the meaning not only of individual words but also of larger sections, such as the sentences they belong to, and of the discourse as a whole. Detecting semantic connections between different entities in a text is an essential step in NLP because it gives meaning to language.

- Pragmatic: extracts the intention of the text. It makes it possible to recognise factors such as irony, ambiguity or mood.
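The preparation step mentioned earlier usually happens before any of these analyses: dividing the text into smaller parts and eliminating irrelevant ones. Below is a minimal Python sketch using only the standard library. Its tiny hand-picked list of English "stop words" is an assumption for illustration. Real NLP toolkits use much larger lists and more careful tokenisers.

```python
"""A minimal sketch of text preparation: splitting text into sentences and
words (tokenisation) and removing words that carry little meaning on their
own (stop words).

Assumption: the tiny English stop-word list is illustrative only; real NLP
toolkits use much larger, language-specific lists and smarter tokenisers.
"""
import re

STOP_WORDS = {"a", "an", "the", "is", "are", "of", "to", "and", "in", "it", "on"}


def split_sentences(text):
    """Split after '.', '!' or '?' when followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def tokenise(sentence):
    """Lower-case the sentence and extract words, keeping contractions like "don't"."""
    return re.findall(r"\w+(?:'\w+)*", sentence.lower())


def remove_stop_words(tokens):
    """Drop tokens that appear in the stop-word list."""
    return [t for t in tokens if t not in STOP_WORDS]


if __name__ == "__main__":
    text = "The cat is on the mat. It is sleeping!"
    for sentence in split_sentences(text):
        print(remove_stop_words(tokenise(sentence)))
    # prints ['cat', 'mat'] and then ['sleeping']
```

The morphological, syntactic, semantic and pragmatic analyses would then work on these cleaned-up tokens. This naive sentence splitter has a known limitation: it would wrongly split after abbreviations such as "e.g.".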

Virtually any human language can be handled by natural language processing models. In practice, however, economic and practical constraints mean that applications in use exist mainly for the languages most widely spoken or used in the digital world.

Different combinations of these processes can be used to create different applications, which, depending on their purpose, will need to incorporate additional processes. For example, an application that transcribes voice recordings into text will not need to perform semantic analysis, but it will need a speech recognition process. By contrast, an advanced chatbot service will require semantic analysis, because meaning is crucial to how a conversation develops.

What applications of this technology may be of practical interest to businesses? Let's look at some examples:

- Automatic translation: This has made great progress in recent years. Translators such as Google Translate or DeepL are very popular, and some videoconferencing services are already planning to offer real-time translation in the near future.

- Text classification: Assigns predefined categories to text documents. Does your company have a large number of documents that it would be useful to classify by content? By identifying keywords, the information can be categorised for more efficient retrieval.

- Text summaries: As with classification, summarising a large document is based on certain key words or phrases.

- Virtual assistants and chatbots: Examples include Siri, Alexa and Google Nest. Thanks to natural language processing, we can ask them to control devices, provide us with information, or save information we want to keep, such as a reminder or a shopping list. They analyse the words they hear to carry out the corresponding tasks.

- The chatbots found on many websites also react to keywords they identify in users' messages to provide the most appropriate information. They are very useful for dealing with simple and frequent queries.

- Text autocorrection functions: These allow faster and less error-prone writing. They not only identify spelling and grammatical errors but can also suggest alternative wording depending on the style you want to follow, or set phrases that can be added quickly.

- Transcription of spoken language into text: Need to record the content of a meeting or interview in a document? You no longer have to write it all down: there are web services that transcribe it automatically.

- Conversion of written text into spoken language: Very useful for people with visual impairments. It converts texts into audio that respects the separation between words, the pauses indicated by punctuation marks, and even the appropriate intonation.
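Two of the applications listed above rest on the same simple idea of looking for known keywords in a text: text classification and the keyword-driven chatbots found on many websites. Below is a minimal Python sketch. The categories, keywords and canned answers are all hypothetical examples, not taken from any real product.

```python
"""A minimal sketch of keyword-based text classification, reused as a very
simple website chatbot.

Assumption: the categories, keywords and canned answers are hypothetical
examples; real systems usually learn from labelled documents instead.
"""
import re
from collections import Counter

CATEGORY_KEYWORDS = {
    "invoices": {"invoice", "payment", "amount", "due", "vat"},
    "human resources": {"holiday", "contract", "salary", "leave", "employee"},
    "technical support": {"error", "password", "login", "crash", "install"},
}

ANSWERS = {
    "invoices": "You can download your invoices from the billing page.",
    "human resources": "Please contact the HR department for this request.",
    "technical support": "Try resetting your password or restarting the app.",
    "other": "Sorry, I did not understand. A member of staff will contact you.",
}


def classify(document, default="other"):
    """Return the category whose keywords occur most often in the document.

    Ties go to the category listed first; no keyword at all gives `default`.
    """
    word_counts = Counter(re.findall(r"\w+", document.lower()))
    scores = {
        category: sum(word_counts[k] for k in keywords)
        for category, keywords in CATEGORY_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else default


def reply(message):
    """Chatbot behaviour: answer with the canned text for the detected category."""
    return ANSWERS[classify(message)]


if __name__ == "__main__":
    print(classify("Please find attached the invoice. Payment is due in 30 days."))
    print(reply("I forgot my password and the login page shows an error"))
```

Counting keywords is enough for the simple, frequent queries mentioned above. It cannot cope with synonyms or negation (for example, "this is not about my salary"), and that is where the semantic analysis described earlier becomes necessary.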

All these applications are already in common use, but Natural Language Processing technology has undergone a great evolution in recent years and is probably one of the applications of Artificial Intelligence that will be most developed in the near future.

Proof of this is the evolution of models such as GPT, created by the company OpenAI. GPT-3, the latest version at the time of writing, can converse on almost any subject. It can also write code or perform calculations, even though it was not specifically trained to do so.

If you wish, you can test the capabilities of GPT-3 yourself. The company provides a general-purpose interface that lets users try it on almost any task in English: https://openai.com/blog/openai-api/.

If you prefer, you can watch the following video, in which two AIs (both GPT-3) talk about what it is like to be an AI:

Source: https://www.youtube.com/watch?v=JcAY-ae2Drw

Other developments, such as OpenAI Codex or, more recently, DeepMind's AlphaCode, specialise in programming: they can write code in a programming language from instructions given in natural language.

For the time being, these models cannot write large programmes on their own, but many programmers already use them as support for coding parts of their programmes. It will certainly be interesting to follow the development of this technology.

Virtual and Augmented reality

Virtual reality (VR) and augmented reality (AR) are undoubtedly key technologies of this century. Both have the potential to change the way we interact with the digital world.

Source: https://www.youtube.com/watch?v=vz0UUVDt2ps

Virtual reality consists of sensory immersion in a fictitious world, whether or not based on real environments, generated by computer technology. This environment is perceived through virtual reality glasses and accessories such as headphones or gloves.

This marks a turning point in the way we access multimedia content for leisure, such as films, documentaries, concerts and video games. It also has multiple applications in business and at work.

VR may seem very contemporary, but the Sensorama, one of the first machines of this kind, was conceived in the mid-1950s. It had a seat, produced vibrations and gave off smells to make the experience as exciting as possible. Since then, virtual reality has evolved exponentially.

Some of the many applications it currently has are as follows:

- Reconstruction of cultural heritage: many monuments that are destroyed or degraded can be visited as if they were in perfect condition, thanks to virtual reality.

- Media: Immersive journalism transports the user to the scene of events with 360° videos broadcast live via streaming.

- Education: In classrooms, the use of VR enables students to retain knowledge better and helps students with learning difficulties.

- Medicine: VR makes it possible to create a 3D recreation of the patient. This facilitates diagnosis and even allows surgical interventions to be simulated before they are performed.

Source: https://www.fib.upc.edu/retro-informatica/avui/realitatvirtual.html

- Industry 4.0: VR offers the opportunity to train people in complex or dangerous tasks before they have to perform them in the real world. This saves costs and greatly increases material and personal safety.

- Architecture: it makes it possible to see a recreation of a house before it is built and to get to know its spaces in greater detail. In addition, when decorating your home, you can view different designs before choosing the final one, seeing them very realistically.

Augmented reality consists of adding layers of visual information such as objects or images to the real world, combining the latter with the virtual world. But in this case we do not necessarily need virtual reality glasses, as our own smartphones can be enough. Thanks to augmented reality, our own world becomes the medium where we interact with virtual objects of all kinds. Everything we see is referenced in a real environment. The clearest and most mainstream example of this concept is Pokémon Go.

Like VR, AR has multiple applications. Some examples:

- Industry: For example, AR allows additional information to be shown to an operator who is monitoring an installation. It also allows other people to work remotely and in real time on the same installation, and it helps train staff in the use of new equipment.

- Medicine: In the medical sector, augmented reality offers solutions that help visualise the patient's internal organs in real time by projecting 2D or 3D images. These images show the organs to be treated in depth and support diagnostic decisions.

- Education: With augmented reality, teachers have a tool that can be applied to any subject and can even be integrated into traditional textbooks. With this technology, young people are discovering a new way of exploring reality.

- Google Maps: this application tells us how to get to any place we want. In addition, its Live View feature overlays step-by-step directions on our surroundings.

- Translation: Systems such as Google Translate can translate text into the language we want, either from a photo or from the image a camera captures in real time.

- Trying out clothing and furniture at home: Thanks to augmented reality, companies such as Ikea have developed applications that let you see catalogue products at real scale, in the exact place in your home where you want them. This makes purchasing easier, since you can try out different models, styles and colours without having the products physically in front of you.

Source: https://www.youtube.com/watch?v=EIJM9xNg9xs&t=209s

The progress of augmented reality is closely linked to Artificial Intelligence, as many of its applications are fuelled by AI-driven analysis of images and data about the environment. As a result, these two technologies are becoming increasingly synergistic.

Much of the data extracted from the user's interactions with the environment can be used by AI to make valuable estimates for the user. Examples include measuring distances between points, predicting faults in an installation and managing tasks to be performed, all of which help improve the user experience.